
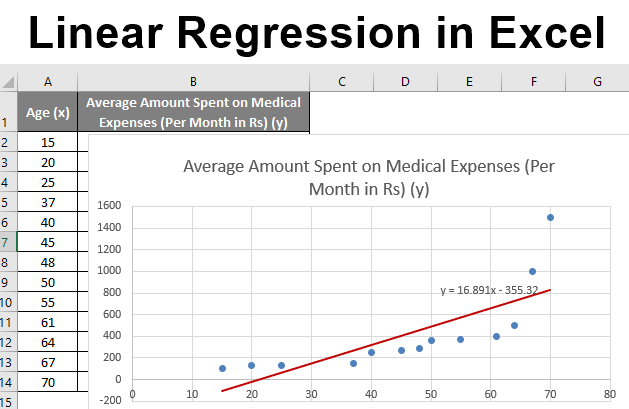

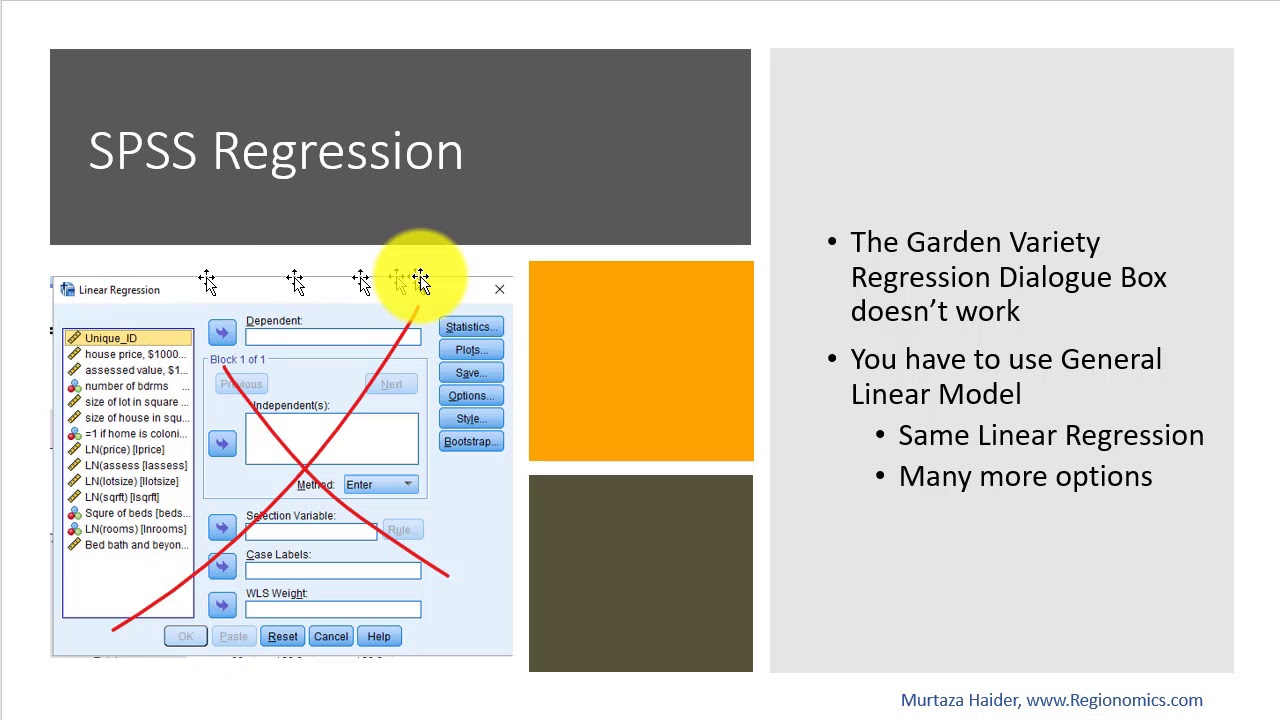

It is fairly clear that Gender could be directly entered into a regression model predicting Salary, because it is dichotomous. Dept Department (1=Family Studies, 2=Biology, 3=Business).Rank (1=Assistant, 2=Associate, 3=Full).When possible, the statistician generally prefers Because of this relationship, uncorrelated predictor variables will be preferred, when possible. Similarly, the R 2 change value for X 1 would always be. It would make no difference at what stage X 2 was entered into the model, the value for R 2 change would always be. The value for R 2 change for X 2 given no variable was in the model would be. The value for R 2 change for X 2 given X 1 was in the model would be. For example, if X 1 and X 2 were uncorrelated (r 12 = 0) and r 1y 2 =. In this case it makes no difference what order the predictor variables are entered into the prediction model. Namely the R 2 change will be equal to the correlation coefficient squared between the added variable and predicted variable. If the additional predictor variables are uncorrelated (r = 0.0) with the predictor variables already in the model, then the result of adding additional variables to the regression model is easy to predict. In some cases, the combined result will provide only a slightly better prediction, while in other cases, a much better prediction than expected will be the outcome of combining two correlated variables. If the additional predictor variables are correlated with the predictor variables already in the model, then the combined results are difficult to predict. The degrees of freedom for the R 2 change test corresponds to the number of variables entered in the block of variables.Ĭorrelated and Uncorrelated Predictor VariablesĪdding variables to a linear regression model will always increase the unadjusted R 2 value. 
Dichotomous variables can be included in hypothesis tests for R 2 change like any other variable.Ī block of variables can simultaneously be entered into an hierarchical regression analysis and tested as to whether as a whole they significantly increase R 2, given the variables already entered into the regression equation. If the regression weight is negative, then addition and subtraction is reversed. If the dichotomous variable is coded as -1 and 1, then if the regression weight is positive, it is subtracted from the group coded as -1 and added to the group coded as 1. If the dichotomous variable is coded as 0 and 1, the regression weight is added or subtracted to the predicted value of Y depending upon whether it is positive or negative.

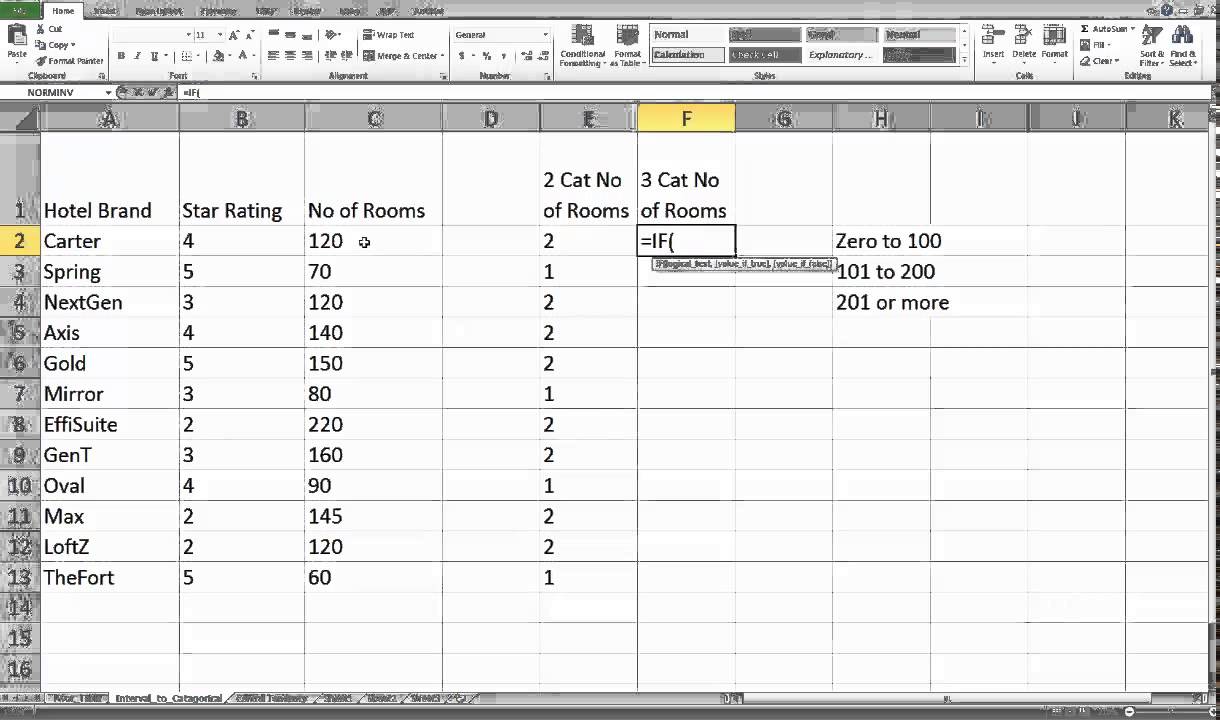

When entered as predictor variables, interpretation of regression weights depends upon how the variable is coded. Their use in multiple regression is a straightforward extension of their use in simple linear regression. The "b" values are called regression weights and are computed in a way that minimizes the sum of squared deviations.Ĭategorical variables with two levels may be directly entered as predictor or predicted variables in a multiple regression model. The prediction of Y is accomplished by the following equation: Multiple regression is a linear transformation of the X variables such that the sum of squared deviations of the observed and predicted Y is minimized. This recoding is called " dummy coding." In order for the rest of the chapter to make sense, some specific topics related to multiple regression will be reviewed at this time. These steps include recoding the categorical variable into a number of separate, dichotomous variables. When a researcher wishes to include a categorical variable with more than two level in a multiple regression prediction model, additional steps are needed to insure that the results are interpretable. Multiple Regression with Categorical Variables
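The dummy coding and the two coding schemes for dichotomous predictors described above can be illustrated with a short NumPy sketch. It assumes ordinary least squares with an intercept, and all numbers are invented for illustration (they are not the chapter's example data). `fit_ols` and `dummy_code` are hypothetical helper names, and choosing level 1 as the reference category is likewise an assumption; any level could serve as the reference.

```python
import numpy as np

def fit_ols(y, *predictors):
    """Return least-squares weights [b0, b1, ..., bk]; b0 is the intercept."""
    X = np.column_stack([np.ones(len(y)), *predictors])
    b, *_ = np.linalg.lstsq(X, y, rcond=None)
    return b

def dummy_code(codes, levels, reference):
    """Hypothetical helper: recode a categorical variable into
    len(levels) - 1 dichotomous (0/1) columns. Cases at the
    reference level are 0 in every column."""
    others = [lv for lv in levels if lv != reference]
    return np.column_stack([(codes == lv).astype(float) for lv in others])

# Invented dichotomous predictor and outcome; group means are 42 and 52.
group = np.array([0, 0, 0, 1, 1, 1], dtype=float)
y = np.array([40.0, 42.0, 44.0, 50.0, 52.0, 54.0])

# 0/1 coding: b1 is added to the prediction for the group coded 1.
b01 = fit_ols(y, group)            # approx [42, 10]

# -1/1 coding: a positive b1 is added for the group coded 1
# and subtracted for the group coded -1.
b_pm = fit_ols(y, 2 * group - 1)   # approx [47, 5]

# Invented three-level Dept using the text's codes 1, 2, 3;
# department means are 51, 61 and 72.
dept = np.array([1, 1, 2, 2, 3, 3])
salary = np.array([50.0, 52.0, 60.0, 62.0, 70.0, 74.0])
D = dummy_code(dept, levels=[1, 2, 3], reference=1)  # columns: Biology, Business
b_dept = fit_ols(salary, D)        # approx [51, 10, 21]

print(b01, b_pm, b_dept)
```

With 0/1 dummies the intercept is the mean of the reference group and each weight is that group's difference from it; with -1/1 coding the weight is half the difference between the two group means.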

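The claim that an uncorrelated predictor's $R^2$ change equals its squared correlation with Y, whatever the order of entry, can be checked numerically. The sketch below builds two predictors that are exactly uncorrelated and also computes the standard F statistic for an $R^2$ change, assuming that is the test meant above: $F = \frac{(R^2_{full} - R^2_{reduced})/m}{(1 - R^2_{full})/(n - k - 1)}$, where $m$ is the number of variables in the block and $k$ the number of predictors in the larger model. The data and the helper names `r_squared`, `r2_change_test` and `r` are hypothetical.

```python
import numpy as np

def r_squared(y, *predictors):
    """Unadjusted R^2 of an OLS model with an intercept plus the given predictors."""
    X = np.column_stack([np.ones(len(y)), *predictors])
    b, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ b
    return 1.0 - (resid @ resid) / np.sum((y - y.mean()) ** 2)

def r2_change_test(y, base, block):
    """R^2 change and F statistic for adding a block of predictors to a base model.

    base and block are lists of 1-D predictor arrays. The numerator df is the
    number of variables in the block; the denominator df is n - k - 1, where k
    counts all predictors in the larger model.
    """
    n = len(y)
    r2_reduced = r_squared(y, *base)
    r2_full = r_squared(y, *base, *block)
    m = len(block)
    df_den = n - (len(base) + m) - 1
    f_stat = ((r2_full - r2_reduced) / m) / ((1.0 - r2_full) / df_den)
    return r2_full - r2_reduced, f_stat, (m, df_den)

def r(a, b):
    """Pearson correlation between two 1-D arrays."""
    return np.corrcoef(a, b)[0, 1]

# Hypothetical predictors constructed to be exactly uncorrelated (r12 = 0).
x1 = np.array([1, -1, 1, -1, 1, -1, 1, -1], dtype=float)
x2 = np.array([1, 1, -1, -1, 1, 1, -1, -1], dtype=float)
y = np.array([3, 1, 4, 1, 5, 9, 2, 6], dtype=float)  # invented outcome

change_x2_first = r_squared(y, x2)                              # X2 entered alone
change_x2_after_x1, f_stat, df = r2_change_test(y, [x1], [x2])  # X2 entered after X1

print(change_x2_first, change_x2_after_x1, r(x2, y) ** 2)  # all three agree
print(f_stat, df)                                           # df == (1, 5)
```

If the predictors were correlated, the two $R^2$ change values would generally differ, which is why the order of entry matters in that case.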